Over the last few weeks I worked a lot with Java Netty sockets and ran into half-open TCP connections. This means the connection is still open on the client side, although the server has already closed it.
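The following minimal sketch shows what a client sees when the server's close does reach it. For a self-contained example it uses plain `java.net` from the JDK instead of Netty (an assumption, not the original application code). On loopback, the server's FIN arrives and the client's `read()` returns `-1`. In a half-open connection that FIN never arrives, so the same `read()` would block, indefinitely if no read timeout is set. The class `FinDemo` and its method are hypothetical names.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;

final class FinDemo {

    /**
     * Opens a loopback connection, closes it from the server side and
     * returns the client's next read() result: -1 means the FIN arrived.
     */
    static int readAfterServerClose() {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket server = new ServerSocket(0, 1, loopback);
             Socket client = new Socket(loopback, server.getLocalPort())) {
            // Server side: accept the connection and close it right away (sends a FIN).
            Socket accepted = server.accept();
            accepted.close();

            // Safety net so the demo fails instead of hanging if no FIN arrives.
            client.setSoTimeout(5_000);
            return client.getInputStream().read();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

A `-1` from `read()` is the orderly-close signal that a half-open client is missing.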

I could not reproduce the issue in my local environment, and refactoring the application code did not help either, so I burned a lot of hours trying to get a stable connection to the socket server, without success.

After a while, we went down to the TCP level to get more information about what was happening.

The affected environment ran on AWS in a private network behind a NAT gateway.

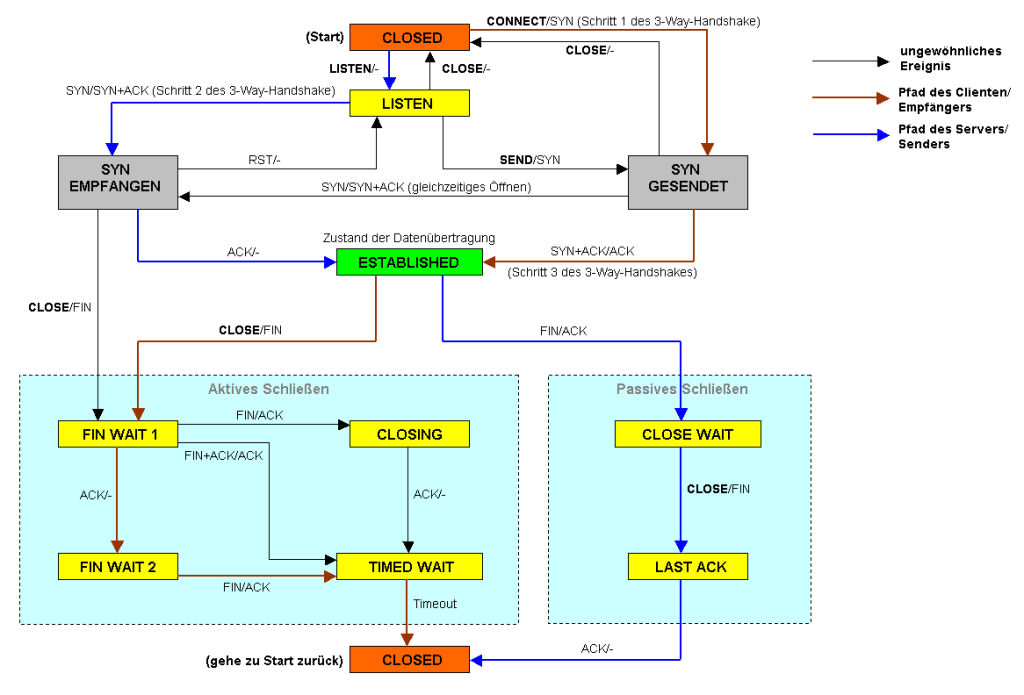

The socket server closes an idle connection after 15 seconds by sending a TCP FIN. This FIN passes through the AWS NAT gateway, which sends a TCP RST to the application server instead of forwarding the FIN. As a result, the application server still believes the connection is established, although it is not.
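From the client's point of view, a lost FIN looks like a peer that simply stays silent. One defence, sketched below under the assumption that the protocol normally sends data or heartbeats at a known interval, is to put a read timeout on the socket and treat its expiry as a possibly dead connection. The names `SilentConnectionCheck`, `State`, `probe` and `demoSilentPeer` are hypothetical, and the 200 ms timeout only keeps the demo fast. In Netty, `IdleStateHandler` and `ReadTimeoutHandler` from `io.netty.handler.timeout` fill a similar role.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.net.SocketTimeoutException;

final class SilentConnectionCheck {

    /** What a single read attempt tells us about the peer. */
    enum State { DATA, CLOSED, SILENT }

    /**
     * Waits up to timeoutMillis for one byte. Note: a received byte is consumed
     * here; real code would hand it to the protocol parser.
     */
    static State probe(Socket socket, int timeoutMillis) throws IOException {
        socket.setSoTimeout(timeoutMillis);
        try {
            // -1 means an orderly close (FIN received); anything else is data.
            return socket.getInputStream().read() == -1 ? State.CLOSED : State.DATA;
        } catch (SocketTimeoutException e) {
            // Nothing arrived in time: the connection may be half-open.
            return State.SILENT;
        }
    }

    /** Simulates a peer that neither writes nor closes, as after a lost FIN. */
    static State demoSilentPeer() {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        try (ServerSocket server = new ServerSocket(0, 1, loopback);
             Socket client = new Socket(loopback, server.getLocalPort());
             Socket accepted = server.accept()) { // server side stays silent
            return probe(client, 200);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

When `probe` returns `SILENT`, the client would close the socket and reconnect rather than keep using a connection the server may already have given up on.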

We could fix it either by replacing the NAT gateway with a NAT instance on AWS, which does forward the TCP FIN, or by connecting the application server directly to the public network.

In the end, there were also some other issues with the NAT gateway and timeouts on long-running connections at the WebSocket level.
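For idle timeouts at the NAT, one commonly used mitigation is TCP keepalive, so that an otherwise quiet connection still carries packets through the gateway. AWS documents that a connection through a NAT gateway times out after 350 seconds of idle time, while Linux by default sends the first keepalive probe only after 7200 seconds, so the idle time has to be lowered as well. The sketch below assumes Java 11 or later, where `jdk.net.ExtendedSocketOptions.TCP_KEEPIDLE` exists; it is not supported on every platform, hence the check. The 60-second value and the names `KeepAliveConfig`, `enableKeepAlive` and `demo` are illustrative assumptions. In Netty, the basic switch is `ChannelOption.SO_KEEPALIVE`.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.Socket;
import java.net.StandardSocketOptions;
import jdk.net.ExtendedSocketOptions;

final class KeepAliveConfig {

    /**
     * Enables TCP keepalive and, if the platform supports it, sets the idle
     * time before the first probe. Returns true if the idle time was applied,
     * false if the OS default idle time still applies.
     */
    static boolean enableKeepAlive(Socket socket, int idleSeconds) throws IOException {
        socket.setOption(StandardSocketOptions.SO_KEEPALIVE, true);
        if (socket.supportedOptions().contains(ExtendedSocketOptions.TCP_KEEPIDLE)) {
            socket.setOption(ExtendedSocketOptions.TCP_KEEPIDLE, idleSeconds);
            return true;
        }
        return false;
    }

    /** Applies the settings to a fresh socket and reports whether keepalive is on. */
    static boolean demo() {
        try (Socket socket = new Socket()) {
            // Assumed value: 60 s keeps probes well below the 350 s NAT idle timeout.
            enableKeepAlive(socket, 60);
            return socket.getOption(StandardSocketOptions.SO_KEEPALIVE);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Keepalive only addresses idleness at the TCP level; it does not replace application-level heartbeats such as WebSocket ping frames.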

Some links with more information:
